Two inspired keynotes today about the vast new possibilities that machine-readable data – or, more precisely, data that machines can act on – open up for the advancement of science. There is so much (digital) data out there that no human can comprehend it all. Fortunately, we have or are developing tireless machines that can publish, merge, search, reason, predict and integrate information. They can establish relationships between fields of science that have never even contemplated getting together, and so make way for new cross-disciplinary work.

Herbert van de Sompel, at right, with yesterday’s keynote speaker Rick Luce.

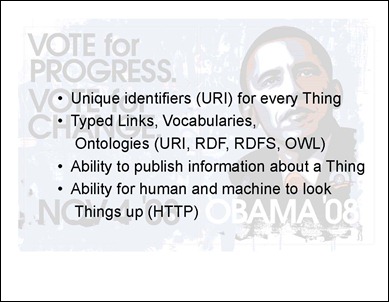

It was, of course, Herbert van de Sompel of Los Alamos who treated the audience of 400 research librarians to a peek into this fascinating world of emerging research possibilities (slides available from slideshare). All based on some rather basic building blocks:

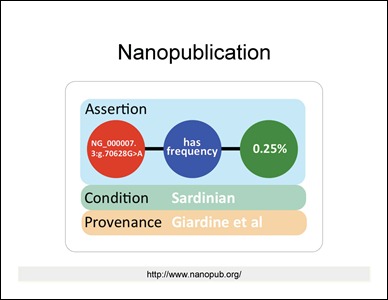

Van de Sompel enthusiastically reviewed some of the projects that should make all of this possible, starting with his own OAI Object Reuse and Exchange, Open Annotation, and Memento, and then moving on to other developments, such as the ‘nano publication’, the smallest entity of information that can be searched, merged, read, and so on by machines – a somewhat extended version of an RDF triple. And then there are Executable Papers: articles that include the software and underlying data so that readers can repeat the original experiments and draw their own conclusions.
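To make the idea of a ‘nano publication’ a little more concrete, here is a minimal sketch in Python. It rests on my own assumption that the ‘extension’ of the RDF triple is mainly provenance (who asserted it, and when); the class names, the `merge` helper and the example.org identifiers are all hypothetical and are not taken from the talk or from any standard.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    """A plain RDF-style statement: subject, predicate, object."""
    subject: str
    predicate: str
    obj: str


class NanoPublication(NamedTuple):
    """Assumed shape: one assertion plus provenance about that assertion."""
    assertion: Triple
    provenance: dict


def merge(pubs):
    """Group provenance records by assertion, so that the same claim
    made by different sources ends up under a single key."""
    merged = {}
    for pub in pubs:
        merged.setdefault(pub.assertion, []).append(pub.provenance)
    return merged


# Hypothetical example data: two sources making the same claim.
claim = Triple(
    "http://example.org/gene/X",
    "http://example.org/associatedWith",
    "http://example.org/disease/Y",
)
pubs = [
    NanoPublication(claim, {"source": "lab-a", "year": 2011}),
    NanoPublication(claim, {"source": "lab-b", "year": 2011}),
]
merged = merge(pubs)
```

Because each statement is this small and carries its own provenance, a machine can count how many independent sources back the same claim without reading a single article.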

Mind-boggling! Van de Sompel explained that this is what we need to make it all happen, ‘and it irks me that we have that at our fingertips’:

- open access to all data

- permissive (i.e., non-restrictive) Creative Commons licences

- money to pay for these tools

- persistence in identifying the objects – and that is still a challenge (see last week’s post)

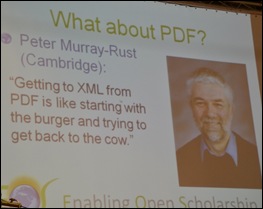

From a digital preservation viewpoint, however, there is a complication. As Alma Swan of Enabling Open Scholarship explained a few hours later, none of this works with PDF. PDF was developed for humans to read. Machines cannot read PDF; they need XML.
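A small sketch of why XML suits machines: the structure is explicit, so a few lines of standard-library Python can pull out exactly the fields a program needs. The record below, its element names and the example.org link are made up for illustration; real repository formats will look different.

```python
import xml.etree.ElementTree as ET

# Hypothetical article record; element names are illustrative only.
RECORD = """<article>
  <title>Example title</title>
  <author>A. Author</author>
  <author>B. Author</author>
  <dataset href="http://example.org/data/1"/>
</article>"""


def extract(xml_text):
    """Return the title, authors and dataset links from one record."""
    root = ET.fromstring(xml_text)
    return {
        "title": root.findtext("title"),
        "authors": [a.text for a in root.findall("author")],
        "datasets": [d.get("href") for d in root.findall("dataset")],
    }


info = extract(RECORD)
```

Getting the same three fields out of a PDF would mean guessing from page layout which line is the title and which are the authors.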

Alma showed that universities are building digital repositories at great speed – there are now almost 2000 of them. But what are we filling them with? Mainly PDFs … because they are nice and robust from a preservation viewpoint. And humans can read them.

Alma Swan (seated) awaiting her turn to speak with session chair Bas Savenije.

There is good news as well. As Alma pointed out, digital repositories are attracting new users, mostly users from outside the university who do not have access to licensed digital content from major publishers. Companies, for instance, and private citizens who are making use of the new digital possibilities and getting involved in scientific efforts, such as these:

However, much of the material these new users are interested in is not available in open access, and thus cannot be used by either humans or machines.

So what are we preserving all of the stuff for?

I’m going to sleep on that one …

Yours truly will spare no effort to tell you everything; here is my paparazzi shot at the VIP room: LIBER President Paul Ayris (left) and Executive Director Wouter Schallier.
