There is a new ISO standard for PDF, ISO 19005-2, better known as PDF/A-2, so the Benelux PDF/A Competence Center decided to organize a seminar. When one of the organizers, Dominique Hermans of DO Consultancy, asked me to do the warm-up presentation, I readily agreed, because I had been hearing some bad things about PDF these last few months and was eager to find out more. While preparing my own talk (slides at the end of this post) I decided to quote those very criticisms (see LIBER2011 blog post), just to get the ball rolling and challenge the experts to comment:

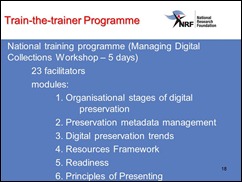

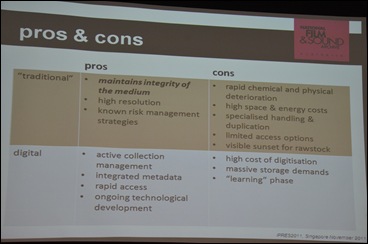

This slide of mine is a mash-up of three slides from Alma Swan's talk at the LIBER 2011 conference, "Open Access, repositories and H.G. Wells".

These criticisms come from people who want machines to analyse large quantities of data in a semantic-web/Linked Data-type environment. Are the criticisms justified? For those of you who, like me, are sometimes confused about what is and what is not possible, I will summarize what the experts told the seminar.

The key one-liner came from Carsten Heinemann of LuraTech:

“PDF was designed as electronic paper”

‘It was designed to reproduce a visual image across different platforms (PC, Mac, operating systems), and for a limited period of time.’ As such, PDF was a really good product, because it was compact and complete and it allowed for random access. But there were also many issues, and Adobe has been working on fixing those ever since. This has resulted in an entire family of PDF formats with different functionalities.

PDF/A is the file format most suited for archiving purposes. The new standard, PDF/A-2, is not a new version of PDF/A-1 in the sense that one would need to migrate from 1 to 2, but rather a new member of the PDF family tree that has improved functionality over PDF/A-1. In other words: migrating from PDF/A-1 to PDF/A-2 is pointless, but if you are creating new PDF documents you may want to consider PDF/A-2, because it can carry over more features from the original document (e.g., JPEG2000 compression, the possibility to embed one file into another, larger page sizes, support for transparency effects and layers).

To make matters more complicated, PDF/A-2 comes in two varieties: compliance level 2a and compliance level 2b. Level a allows for more access by search engines such as those used in semantic web techniques, because it requires that files not only provide a visual image, but also that they are structured and tagged and include Unicode character maps.
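For readers who want to see which variety a file claims to be: a PDF/A file declares its part and conformance level in its XMP metadata, using the `pdfaid:part` and `pdfaid:conformance` properties. The following is a minimal sketch, assuming the XMP packet has already been extracted from the PDF as a string; the function name `pdfa_claim` and the sample packet are mine, for illustration only. Note that this only reads what the file claims. Whether the file actually conforms is a matter for a validator.

```python
import xml.etree.ElementTree as ET

# Namespace of the PDF/A identification schema used in XMP metadata.
PDFAID_NS = "http://www.aiim.org/pdfa/ns/id/"
RDF_NS = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"


def pdfa_claim(xmp_packet: str):
    """Return the (part, conformance) claimed in an XMP packet, or None.

    Hypothetical helper: it reads the claim only and validates nothing.
    """
    root = ET.fromstring(xmp_packet)
    for desc in root.iter(f"{{{RDF_NS}}}Description"):
        # XMP allows simple properties as attributes or as child elements.
        part = (desc.get(f"{{{PDFAID_NS}}}part")
                or desc.findtext(f"{{{PDFAID_NS}}}part"))
        level = (desc.get(f"{{{PDFAID_NS}}}conformance")
                 or desc.findtext(f"{{{PDFAID_NS}}}conformance"))
        if part:
            return part.strip(), (level or "").strip().upper()
    return None


# Made-up sample packet claiming PDF/A-2, level a.
SAMPLE_XMP = """<x:xmpmeta xmlns:x="adobe:ns:meta/">
  <rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#">
    <rdf:Description rdf:about=""
        xmlns:pdfaid="http://www.aiim.org/pdfa/ns/id/">
      <pdfaid:part>2</pdfaid:part>
      <pdfaid:conformance>A</pdfaid:conformance>
    </rdf:Description>
  </rdf:RDF>
</x:xmpmeta>"""

print(pdfa_claim(SAMPLE_XMP))  # ('2', 'A')
```

A result of `('2', 'A')` corresponds to what the seminar called level 2a, and `('2', 'B')` to level 2b.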

Heinemann concluded: XML is for transporting data; PDF is for transporting visual representations. To which I may add: XML is for use by machines, PDF is for use by humans.

Misuse of PDF is easy

Raph de Rooij of Logius (Ministry of the Interior) told his audience that one should not be too quick to say that something is “impossible” with PDF. A lot is possible, but you have to use the tools the right way – and that is where things often go wrong.

Raph demonstrated that most PDFs put online by government agencies do not meet the government’s own requirements for web usability, including accessibility for people who are, for example, visually impaired. “The many nuances of the PDF discussion often get lost in translation,” he said. The trick is to pay a lot of attention to organizing the workflow that ends in PDFs.

PDF is no silver bullet

Ingmar Koch, a well-known (blogging) Dutch public records inspector, has seen many examples of PDF misuse. “Public officials tend to think of PDF as a silver bullet that solves all of their archiving problems.” But PDF was never designed to include anything that is not static (Excel sheets with formulas, movies, interactive communications, etc.).

From the left: Caroline vd Meulen, Ingmar Koch, Bas from Krimpen a/d IJssel and Robert Gillesse of the DEN Foundation.

From a preservation point of view, I heard some shocking case studies from public offices. An official will type the minutes of a council meeting in Word, make a print-out, have the print-out signed physically, then OCR the document and convert it to PDF for archiving. I dare not imagine how much information gets lost in the process. But then again, we all know that data producers’ interests are often different from archives’ interests. Public offices just want to make a “quick PDF” and not be bothered by all the nuances.

How about validation?

There is a lot of talk about “validating” PDF documents. First of all, PDFs are created by all sorts of software, and what that software produces often does not conform to the ISO standards and is thus rejected by validators. Things get more confusing when validators return different verdicts. Heinemann explained: “That’s because some validators only check 30%, whereas some will check 80%. The latter may find something the first did not see.”
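Heinemann's point about coverage can be made concrete with a toy example. This is only an illustrative sketch: the rule names and the document fields below are invented and do not correspond to any real validator or to the actual clauses of the ISO standard. It shows how the same file can pass a validator that checks few rules and fail one that checks more.

```python
# Hypothetical checks, for illustration only; real validators test far more.
def fonts_embedded(doc):
    return doc["fonts_embedded"]


def xmp_present(doc):
    return doc["xmp_present"]


def not_encrypted(doc):
    return not doc["encrypted"]


def unicode_maps_present(doc):
    return doc["unicode_maps"]


ALL_RULES = [fonts_embedded, xmp_present, not_encrypted, unicode_maps_present]


def validate(doc, rules):
    """Return the names of the rules that the document fails."""
    return [rule.__name__ for rule in rules if not rule(doc)]


# A made-up document that violates exactly one rule.
doc = {
    "fonts_embedded": True,
    "xmp_present": True,
    "encrypted": False,
    "unicode_maps": False,
}

shallow_validator = ALL_RULES[:2]   # checks only half of the rules
thorough_validator = ALL_RULES      # checks all of them

print(validate(doc, shallow_validator))   # []
print(validate(doc, thorough_validator))  # ['unicode_maps_present']
```

Both validators are "right" about the rules they check; the verdicts differ only because the first never looks at the rule the file breaks.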

At the end of the day …

It seems that, indeed, there are millions and millions of PDFs out there that can only provide a visual representation and are no good when it comes to Linked Data and the Semantic Web. But PDF is catching up, adding new features all the time. I understand that we may even expect a PDF/A-3, which will support including the original source file in the digital object. Ingmar Koch did not seem too happy about such functionality: it would make his life as a public records inspector even harder. But from a preservation point of view, that just might be as close to a silver bullet for archiving as we will ever get.

Meanwhile, if you want to use PDF in your workflow, it is worth asking an expert which type of PDF is appropriate in your case!

Comments by Adobe

Adobe itself was very quick to respond to this blog post in an e-mail I found this morning. Leonard Rosenthol, PDF Architect, was not very pleased with the picture painted by the above workshop – as a matter of fact, he used the word “appalled”. He asserted that PDF and XML/Linked Data go very well together and that various countries and government agencies have already adopted a scenario that ‘presents a best of two worlds’. Here is his link to a recent blog post by James C. King that describes how it is done: <http://blogs.adobe.com/insidepdf/2011/10/my-pdf-hammer-revision.html>.

That blog post is an interesting addition to the workshop results (confirming Raph de Rooij’s assertion that “nothing is impossible”), but it does not take away the fact that PDF is often misused. I would guess that is because it is complicated stuff. “Making a quick PDF” just does not do it. The recommendation to seek expert advice, therefore, stands!

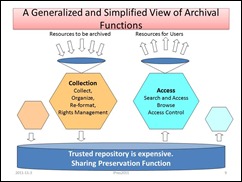

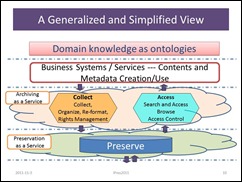

Lastly, here is my own presentation: a broad overview of developments in the digital information arena to start off the day – in Dutch:

For the Dutch fans: Ingmar Koch has blogged about this event here, and the slides will become available here. Thanks also to KB colleague Wouter Kool for helping me understand PDF.