It is Friday morning, 9 am. In the other room they are talking about cost modelling. I have opted for the emulation session, continuing the thread from the KEEP workshop I blogged about last week. Judging by the number of participants, emulation is not yet a “hot” topic in the DP community. But perhaps it is only because it is Friday morning, the third conference day – people keep sneaking in with cups of black coffee in their hands.

The first paper conveys a really bright idea, which has also come up in the Netherlands (Maurice van den Dobbelsteen at the National Archives). If your job is archiving large amounts of data from a controlled environment (e.g., a government ministry), would it not be a great idea to simply make a virtual copy of the entire hardware/software environment in which the objects are produced? Then, when the data come into the archive, all you have to know is when they were produced and at which ministry, and your emulation environment is ready to go. That would save loads of work at the preservation stage.

A similar procedure could work if you want to harvest the archive of significant persons. For example, there was a project to emulate Salman Rushdie’s computer environment, to be able to access his files later.
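For the more technically minded, the lookup step could be sketched roughly as follows in Python. This is only my illustration of the idea, not the presenters' implementation: the `EnvironmentImage` record, the `find_environment` function, the producer names and the image paths are all made up.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Optional


@dataclass(frozen=True)
class EnvironmentImage:
    """A stored virtual copy of a producer's hardware/software environment (hypothetical)."""
    producer: str        # e.g. the ministry that created the records
    valid_from: date     # first day this environment was in use
    valid_to: date       # last day this environment was in use
    image_path: str      # hypothetical location of the virtual machine image


def find_environment(registry: Iterable[EnvironmentImage],
                     producer: str,
                     produced_on: date) -> Optional[EnvironmentImage]:
    """Return the environment that was in use at `producer` on `produced_on`, if any."""
    for env in registry:
        if env.producer == producer and env.valid_from <= produced_on <= env.valid_to:
            return env
    return None


# Made-up registry entries, for illustration only.
REGISTRY = [
    EnvironmentImage("Ministry A", date(2005, 1, 1), date(2008, 12, 31), "images/ministry-a-2005.img"),
    EnvironmentImage("Ministry A", date(2009, 1, 1), date(2011, 12, 31), "images/ministry-a-2009.img"),
]

match = find_environment(REGISTRY, "Ministry A", date(2010, 6, 1))
print(match.image_path if match else "no environment archived")
```

The point is that the archive only needs two facts about an incoming object, producer and date, to pick the right environment.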

Euan Cochrane (left) and Dirk von Suchodoletz

Euan Cochrane of the Archives of New Zealand and Dirk von Suchodoletz of the University of Freiburg present this approach at iPRES2011. They ran some tests and inevitably found some technical problems, but nothing that cannot be overcome. And I can imagine that, if it works, it can really be a time and money saver, especially in the long run. However, as always, there are challenges. Some are inherent to all emulation strategies: you need workable emulators and emulators themselves become obsolete – for which, of course, there is the KEEP approach which I blogged about earlier. Bram Lohman is presenting KEEP here in a minute.

One obstacle is unique to this particular approach: the data producers should include ‘emulatability’ in their calls for bids for computer systems. Technically this should not be too difficult, but in terms of licenses, there may be catches. I will blog about the copyright problems later. The KEEP project did a lot of work on that.

The next presentation raises the level of complexity a bit – at least for non-techies like me. It is entitled: “Using emulation as a tool for migration”, by Klaus Reichert et al.

Emulation developers: from the left Dirk von Suchodoletz, Klaus Reichert and Euan Cochrane

Because I did not understand it very well, I asked Klaus during the coffee break. This is my layman’s version of how it works: almost every software programme comes with “little” migration tools, e.g., between Word 2003 and Word 2010. If you want to use a file that does not open on your present software/hardware combination and there is no direct migration tool, you can re-create the original environment (emulation), perform the “little” migration there, and then use the resulting file on your present system. The advantages are that you do not need to write new migration routines and you can use the file within your present context – without the old “handicaps”, if you will. See the slide below.

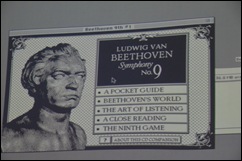
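My layman's version of this can also be written down as a small Python sketch. It treats each “little” migration tool as a step from one format to another that is available inside some environment, and searches for a chain of such steps. This is an assumption-laden illustration, not the method from the paper: the `CONVERTERS` catalogue, the environment names and the format names are all hypothetical.

```python
from collections import deque

# Hypothetical catalogue: environment -> list of (source_format, target_format)
# conversions that the software inside that environment can perform.
CONVERTERS = {
    "emulated-old-office": [("format-v1", "format-v2")],
    "emulated-mid-office": [("format-v2", "format-v3")],
    "current-system": [("format-v3", "format-v4")],
}


def find_migration_path(converters, source, target):
    """Breadth-first search for the shortest chain of conversions.

    Returns a list of (environment, from_format, to_format) steps,
    an empty list if no conversion is needed, or None if no chain exists.
    """
    if source == target:
        return []
    queue = deque([(source, [])])
    seen = {source}
    while queue:
        fmt, path = queue.popleft()
        for env, pairs in converters.items():
            for src, dst in pairs:
                if src != fmt or dst in seen:
                    continue
                steps = path + [(env, src, dst)]
                if dst == target:
                    return steps
                seen.add(dst)
                queue.append((dst, steps))
    return None


for env, src, dst in find_migration_path(CONVERTERS, "format-v1", "format-v4"):
    print(f"in {env}: convert {src} -> {dst}")
```

Each step that names an emulated environment is one where you would start the emulator, open the file in the old software and save it in the newer format.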

The case studies in this session include Mark Guttenbrunner, on home computer software emulation, Roman Graf & Reinhold Huber-Mork on braille conversion, and Geoffrey Brown (Indiana University), on emulating some interactive Voyager (1989-1997) publications on CD-ROM: classic Mac applications such as Robert Winter’s Interactive Beethoven’s 9th (you could play themes, play notes, play synthesizer versions without certain rhythms, etc.). Let me give you the screenshot as a reminder of what we do all our hard work for (you have to imagine the music). You can find the technical details in the proceedings.

With vintage American pragmatism, Brown said: ‘Our goal is to demonstrate that emulation is practical’ – and that is what we all want. But he also said: ‘At the moment, a lot of this is hobby stuff.’ And there seems to be a lot of that in the emulation environment. To really make it work we need more sustainable initiatives such as, perhaps, the Open Planets Foundation (OPF Director Bram van der Werf is in the room …).

Geoffrey Brown answering questions.

After this semi-live blogging session, we are off to lunch. This afternoon there is the workshop on International Alignment in Digital Preservation, in which I am involved myself. As it is Friday afternoon, the last conference day, and as we have competition from a session on web analytics and from organized visits to the National Library and National Archives, we are not expecting the greatest turnout. But quality can do a lot to make up for quantity ;-)

Expect a few more posts, though. Because of time constraints I had to forgo some good stuff. I will report on that in the course of the next few days.

What to Wear is not only a ladies’ question here – air conditioning sometimes works too well for some of us.
